The greatest threat posed by artificial intelligence is not the technology itself. It is the widespread failure to question the direction in which society is being taken.

Inaction – or passive acceptance – is still a choice. And right now, society is choosing to welcome every new form of AI, and every narrative that accompanies it, without scrutiny, challenge, or the responsible oversight that should exist on behalf of the public.

Because of this, only the part of the story that sounds good is visible. The rest is ignored, dismissed, or assumed to be impossible.

Yet inevitability is not a fact. It is a story – one that has been repeated so often that it feels like truth.

Before exploring a healthier, human‑centred approach to AI, it is important to acknowledge something uncomfortable: the warnings and “scare stories” are not entirely wrong. They could become real if the current trajectory continues. Job losses, surveillance capitalism, social credit systems, and the ability of authorities or corporations to monitor, restrict, or condition everyday life are no longer distant possibilities. They are emerging realities.

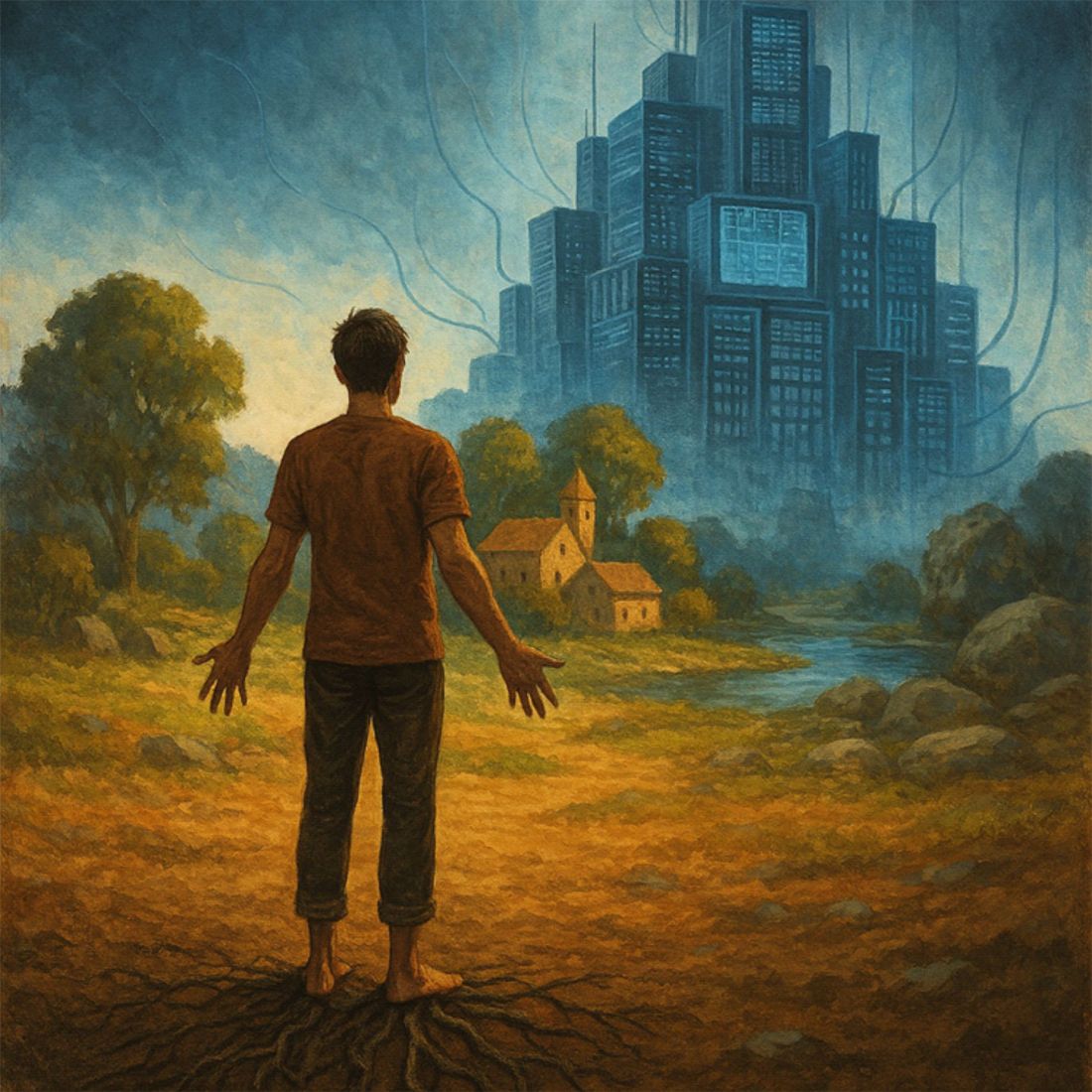

If left unchecked, society could drift into a world where freedoms – physical, economic, and even cognitive – are constrained by digital systems that ordinary people do not control. A world where what individuals buy, read, watch, eat, or even think is shaped or limited by algorithms designed to serve interests that are not their own.

At its most extreme, AI could resemble the dystopias portrayed in films such as The Terminator. Not because AI is inherently malicious, but because the motives driving its development today are rooted in profit, power, and control – the same flawed incentives that already distort economic and political systems.

AI is not evil. But the forces shaping it often act without the moral responsibility, empathy, or long‑term thinking that such powerful tools demand.

They are supported by institutions that have drifted far from the responsibilities they are meant to uphold.

As a result, the guardrails, safeguards, and “dead man’s switches” that should have been built into these technologies from the beginning simply aren’t there.

Under a different approach, none of this would be inevitable.

Jobs would not be threatened.

People would be prioritised above profit.

Technology would remain under local, human control – never remotely overridden, never given ultimate authority over human decisions.

But to understand why this alternative feels unrealistic, a deeper truth must be confronted – one that goes far beyond AI.

Why Change Feels Impossible

The system society lives within today depends on people believing that alternatives are impossible. It depends on the assumption that the digital world is the “future”, that physical experience is outdated, that human sovereignty is negotiable, and that the only meaningful actions are those that fit neatly inside the structures already in place.

This conditioning is not new.

It is the same mindset that discourages questioning the role of money, the pursuit of endless growth, or the economic assumptions inherited without consent.

It is the same mindset that responds to new ideas with “that wouldn’t work”, not because the ideas are flawed, but because the system has taught people to believe that nothing outside its boundaries is realistic.

Now, this same psychological trap is being applied to AI.

The public is encouraged to see AI as unstoppable, unquestionable, and unquestionably beneficial. Resistance is framed as futile. Questioning is framed as naïve. The only “sensible” response, it is implied, is to adapt human life to the technology rather than shaping the technology to serve human life.

But this is not inevitability.

This is learned helplessness – a belief engineered by a system that benefits from centralisation, dependency, and digital control.

The Human Future Is Physical, Not Digital

Human beings are not digital creatures.

They are physical, relational, meaning‑seeking beings. Wellbeing, identity, and purpose come from the physical world – from relationships, contribution, community, and agency.

Digital tools can support these things, but they cannot replace them.

When AI is used to replace physical experience, human judgement, or human contribution, it becomes harmful.

When it is used to support these things, it becomes a force for good.

The difference is not technological. It is philosophical. It is about who the technology serves – and who it is allowed to control.

Human Sovereignty Must Remain a Foundational Principle, Not a Variable to be Optimised

A human‑centred approach to AI begins with a simple principle:

Human sovereignty must remain a foundational principle of any responsible approach to AI.

This means recognising that AI exists to support human judgement, human values, and human experience – not to replace or override them.

When systems are allowed to condition behaviour, arbitrate choices, or quietly close off alternatives, human agency is diminished, even if efficiency appears to increase.

This is not simply a technical concern. It is a constitutional one. When societies delegate meaningful decisions to systems they cannot collectively understand, contest, or control, they risk allowing tools to become authorities and optimisation to quietly replace consent.

For AI to serve human flourishing, it must remain subordinate to human decision‑making and grounded in the physical and social realities of human life.

Any system that becomes a gatekeeper of freedom, access, or participation ceases to be a neutral tool and instead reshapes the conditions of sovereignty itself.

Actions Speak Louder Than Digital Words

Change will not come from thinking alone.

It will not come from digital debate, symbolic gestures, or waiting for someone else to act.

Change happens when people behave differently – physically, locally, and together. When they stop acting as if the system is unchangeable. When they stop accepting inevitability as truth. When they stop believing that alternatives are impossible.

The future of AI will not be determined by technology.

It will be determined by whether people rediscover their own agency.

Stepping Back Requires Stepping Out of the Story

A different relationship with AI is possible.

A different direction is possible.

A different future is possible.

But only if society first recognises that the barriers to change are psychological, not technological. The limits that feel immovable are not real limits. They are inherited assumptions – and assumptions can be questioned, challenged, and replaced.

A human‑centred future is unlikely to emerge from the system as it currently exists without deliberate rupture, refusal, or redesign. It will emerge from people rediscovering their own sovereignty, their own capacity for contribution, and their own ability to shape the world around them – physically, not digitally.

AI is not the threat.

The belief that society cannot choose differently is.