What is the Human Sovereignty Charter?

The Charter is a set of principles designed to make sure people stay in charge of technology, not the other way around. It sets out clear expectations for how AI should be used in society, and what rights individuals and communities have when AI affects their lives.

It is not a technical manual. It is a human‑centred framework for fairness, dignity, and accountability in an AI‑enabled world.

Key Takeaways

1. Humans must always remain in control

AI can support decisions, but it must never replace human judgement or authority. People make final decisions — not machines.

2. AI must respect human dignity

No system should reduce people to data points or treat them as objects to be optimised.

3. You have the right to know when AI is being used

There should be no hidden systems or secret automated decisions.

4. You can challenge decisions made with AI

If an AI system affects you, you have the right to question it and get a human review.

5. Your data belongs to you

Organisations must protect your information and use only what is necessary, with clear justification.

6. Communities have rights too

AI must not harm neighbourhoods, groups, cultures, or vulnerable populations. Communities can say “no” to harmful uses.

7. AI must be transparent and accountable

Organisations must be able to explain how their systems work and take responsibility for their impact.

8. AI must not replace meaningful human work

Technology should support people, not push them aside or deskill entire professions.

9. Oversight must be independent

No organisation should be allowed to regulate its own AI systems without external scrutiny.

10. The Charter evolves with technology

It includes a process for updates so it stays relevant as AI develops.

Frequently Asked Questions

Is this Charter based on Asimov’s I, Robot?

No. The Charter was developed independently and is not inspired by Asimov’s work.

Asimov wrote science fiction stories about robots and their internal programming.

The Charter is a real‑world governance framework focused on human rights, community protection, and accountability.

People sometimes make the comparison because Asimov is culturally associated with “rules for robots,” but the Charter is about protecting people, not programming machines.

Why do we need a Charter for AI?

AI is increasingly used in decisions about:

- jobs

- healthcare

- education

- policing

- public services

Without clear rules, these systems can become unfair, intrusive, or harmful.

The Charter provides a principled foundation to prevent misuse and protect human dignity.

Who is the Charter for?

It is designed for:

- citizens

- communities

- workers

- public institutions

- policymakers

- technologists

- educators

Anyone affected by AI — which increasingly means everyone — can use it.

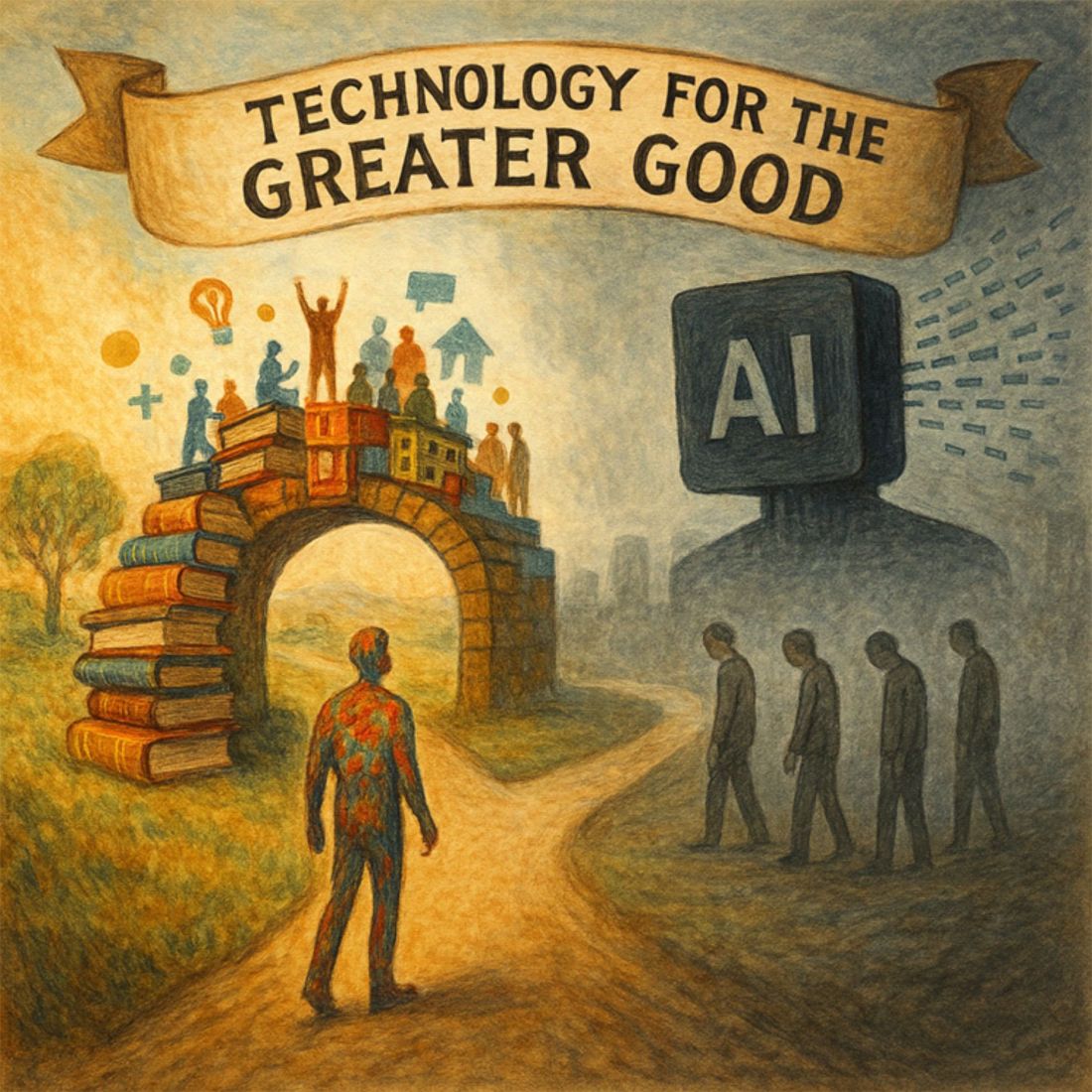

Does the Charter oppose AI?

No. It supports responsible, human‑centred use of AI.

It opposes:

- replacing human judgement

- unnecessary automation

- unaccountable systems

- harmful or opaque uses of technology

The Charter encourages innovation that strengthens society rather than undermining it.

Does the Charter have legal force?

Not automatically.

It is designed to be:

- voluntarily adopted

- used as a governance framework

- referenced in policy development

- a foundation for future legislation

It gives organisations a clear, principled way to use AI responsibly.

How does the Charter protect communities?

It recognises that AI affects groups as well as individuals.

Communities have the right to:

- reject harmful technologies

- demand transparency

- expect fairness

- protect cultural, social, and economic wellbeing

This is a major difference from most AI frameworks, which tend to focus on individuals rather than groups.

How does the Charter protect workers?

It states clearly that AI must not:

- replace meaningful human work

- deskill professions

- remove human expertise

- centralise power in ways that harm workers

AI should support people, not make them redundant.

How does the Charter protect personal data?

It requires:

- data minimisation

- clear justification for data use

- strong safeguards

- transparency

- accountability

Your data should never be used in ways that harm you or your community.

What makes this Charter different from other AI ethics guidelines?

Most AI guidelines focus on:

- technical safety

- risk management

- responsible innovation

The Human Sovereignty Charter focuses on:

- human rights

- community protection

- sovereignty and dignity

- limits on automation

- preserving human judgement

It is a constitutional‑style document, not a corporate ethics checklist.

In one sentence

The Human Sovereignty Charter is designed to ensure that AI serves humanity, never the other way around.

To Read The Charter

The Human Sovereignty Charter for Artificial Intelligence can be read in full online, free of charge, HERE:

To buy a copy for Kindle, please follow this link HERE: